The difference between a successful platform and a ghost town often comes down to one algorithm: the recommendation system. Netflix estimates its recommendation engine saves over 1 billion USD annually by reducing churn. TikTok built a global empire not on social graphs, but on an algorithmic feed that knows you better than you know yourself.

But building a system that scales to 100 million users while returning results in under 200 milliseconds is a massive engineering challenge. It requires balancing mathematical rigor with heavy infrastructure constraints.

This guide moves beyond simple collaborative filtering tutorials. We will design a production-grade recommendation system capable of serving video content at the scale of YouTube or Netflix. We will cover the "funnel" architecture, vector databases, two-tower neural networks, and the critical trade-offs that senior engineers face daily.

1. Clarifying Requirements

In a system design interview or a real-world scoping meeting, you never start coding immediately. You start by defining the boundaries.

Interviewer: "Design a recommendation system for a video streaming platform."

Candidate: "Let's narrow the scope. Are we designing the homepage feed (discovery) or the 'Up Next' sidebar (related content)?"

Interviewer: "Focus on the homepage feed. We want users to discover new content they'll love."

Candidate: "What is the scale? Are we a startup or a tech giant?"

Interviewer: "Assume we are at scale. 100 million daily active users (DAU) and 10 million videos."

Candidate: "What are the latency constraints? Does the feed update in real-time as I click things?"

Interviewer: "Latency must be under 200ms. The feed should refresh near real-time, but slightly delayed updates (minutes) are acceptable for user profiles."

Candidate: "How do we handle new users with no watch history?"

Interviewer: "Good question. We need a fallback strategy for cold start scenarios."

System Requirements Summary

Functional Requirements:

- Generate Feed: Users see a personalized list of videos upon login.

- Record Interactions: System tracks clicks, likes, watches, and "not interested" signals.

- Handle Cold Start: System must handle new users and new videos gracefully.

Non-Functional Requirements (The Numbers):

- DAU: 100 Million.

- Content Library: 10 Million videos.

- QPS (Queries Per Second): 100M users × 5 visits/user/day ≈ 500M feed requests/day, or roughly 5,800 QPS on average. Traffic is not evenly spread across the day, so we plan for peaks of ~50,000 QPS.

- Latency: P99 latency < 200ms.

- Availability: 99.99% (The feed must always load, even if recommendations are slightly stale).

- Storage:

- Metadata: 10M videos × 1KB ≈ 10GB (Small).

- Interaction Logs: 100M users × 50 events/day × 100 bytes ≈ 500GB/day. This is the heavy lifter.

Interview Tip: Always calculate QPS and storage on the whiteboard. For 500GB/day, you immediately know you need a distributed log system like Kafka, not a direct database write.
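
The whiteboard arithmetic can be reproduced in a few lines. This is a minimal sketch using only the numbers quoted in the requirements; the variable names are illustrative, and the peak-to-average ratio is simply what the 50,000 QPS target implies, not a measured value.

```python
# Back-of-envelope capacity estimate from the stated requirements.
DAU = 100_000_000             # daily active users
VISITS_PER_USER = 5           # feed requests per user per day
EVENTS_PER_USER = 50          # logged interactions per user per day
EVENT_SIZE_BYTES = 100        # average serialized event size
VIDEOS = 10_000_000
VIDEO_METADATA_BYTES = 1_000  # ~1 KB per video
SECONDS_PER_DAY = 86_400

avg_qps = DAU * VISITS_PER_USER / SECONDS_PER_DAY
peak_qps_target = 50_000      # provisioning target quoted in the requirements
implied_peak_factor = peak_qps_target / avg_qps

log_bytes_per_day = DAU * EVENTS_PER_USER * EVENT_SIZE_BYTES
metadata_bytes = VIDEOS * VIDEO_METADATA_BYTES
avg_ingest_mb_per_s = log_bytes_per_day / SECONDS_PER_DAY / 1e6

print(f"average QPS:          {avg_qps:,.0f}")
print(f"implied peak factor:  {implied_peak_factor:.1f}x")
print(f"interaction logs:     {log_bytes_per_day / 1e9:,.0f} GB/day")
print(f"video metadata:       {metadata_bytes / 1e9:,.0f} GB")
print(f"average log ingest:   {avg_ingest_mb_per_s:.1f} MB/s")
```

The average request rate is far below the peak target, which is why the peak factor, not the daily average, drives how much serving capacity you provision.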

2. High-Level Architecture

At 50,000 QPS, you cannot simply query a database for "videos user X likes." You need a decoupled architecture that separates Online Serving (fast) from Offline Training (slow). Many production systems also add a Nearline Layer between the two: jobs that complete in minutes rather than milliseconds or hours. For example, Flink or Spark Streaming can update user embeddings from Kafka events every few minutes, so recommendations reflect recent behavior without the latency cost of true real-time inference.

Understanding the Architecture:

The diagram shows three distinct layers that work together to serve recommendations in under 200ms:

Online Serving Layer (Top Row): The request flows left-to-right through five components:

- Client App sends a request for personalized recommendations

- API Gateway handles authentication, rate limiting, and request validation

- Rec Service (Recommendation Service) orchestrates the entire flow—it doesn't compute recommendations itself but coordinates between other services

- Inference serves the ML models (both retrieval and ranking)

- Vector DB (Faiss/Milvus) stores pre-computed video embeddings for fast similarity search

Data Storage Layer (Middle Row):

- Kafka captures all user events (clicks, watches, scrolls) in real-time

- PostgreSQL stores structured metadata (user profiles, video details)

- Redis acts as the Feature Store—pre-computed features ready for instant lookup during inference

Offline Training Layer (Bottom Row): This runs daily/weekly, not during user requests:

- Data Lake (S3/HDFS) stores historical event logs

- Spark processes logs into training features

- ML Training trains the recommendation models on GPU clusters

- Model Registry versions and stores trained models, which are then deployed to the Inference service

Key Data Flows:

- User actions flow from Client → Kafka → Data Lake (for training)

- Trained models flow from Model Registry → Inference (for serving)

- Pre-computed features flow from Spark → Redis (for real-time lookup)

The Recommendation Service is the brain. It doesn't do the heavy lifting itself; it orchestrates the retrieval and ranking steps.

3. Data Model & Storage

Data is the fuel for recommendation engines. We need to store three types of data efficiently.

Understanding the Data Model:

The diagram shows the three core entities and their relationships:

User Data (Left):

- Primary key: `user_id`

- Fields: demographic information (age, location, language, device preferences)

- Storage: PostgreSQL (~10GB total for 100M users)

- Why PostgreSQL? User profiles are read-heavy with occasional updates—perfect for relational databases

Interaction Logs (Center):

- Primary key: `event_id`

- Fields: `event_type` (click, watch, like, skip), `timestamp`, `context`

- Storage: Kafka for ingestion → S3/Parquet for long-term storage

- Volume: ~500GB/day at our scale (100M users × 50 events/day × 100 bytes)

- This is the heaviest data and powers all model training

Video Data (Right):

- Primary key: `video_id`

- Fields: title, tags, duration, uploader, upload_time

- Storage: PostgreSQL (~10GB for 10M videos)

- Updated infrequently (video metadata rarely changes)

The Relationships:

- User 1:N Interaction — One user generates many interactions

- Interaction N:1 Video — Many interactions point to each video

This schema enables two critical queries:

- "What has this user interacted with?" (personalization)

- "Which users interacted with this video?" (collaborative filtering)

1. User & Video Metadata (Relational)

We need fast random access to profile data and video details. Database: PostgreSQL or Cassandra (if write-heavy).

- User: `user_id`, `age`, `location`, `language`, `device_type`

- Video: `video_id`, `title`, `tags`, `duration`, `uploader_id`, `upload_time`

2. User Interaction Logs (Time-Series / Stream)

Every click, scroll, and hover is a signal. Storage: Apache Kafka (ingestion) → Parquet on S3 (long-term).

{

"event_id": "evt_98765",

"user_id": "u_12345",

"video_id": "v_555",

"event_type": "watch_75_percent",

"timestamp": 1672531200,

"context": {"connection": "4g", "device": "iphone13"}

}

3. Derived Features (Key-Value Store)

During inference, we can't compute "average watch time last 30 days" on the fly. We pre-compute these features. Storage: Redis or Cassandra.

- Key: `u_12345`

- Value: `{"avg_watch_time": 450s, "top_genre": "scifi", "recent_clicks": [...]}`

Common Pitfall: Beginners often query the raw interaction logs during the recommendation request. This kills latency. Always use a Feature Store (like Redis) with pre-aggregated stats for real-time inference.

Understanding the Feature Store:

The Feature Store solves a critical problem: you cannot compute "user's average watch time over 30 days" during a 200ms request. Instead, you pre-compute features offline and serve them instantly.

Offline Path (Left Side):

- Data Lake stores all historical events

- Spark/Flink runs batch jobs to compute aggregate features (e.g., "user watched 45% sci-fi content last month")

- Offline Store (Parquet/Hive) stores these features for model training

- ML Training uses these features to train models

Sync Process (Center):

- Spark pushes computed features to Redis hourly

- This ensures the online store has fresh features without real-time computation

Online Path (Right Side):

- User Request arrives with `user_id`

- Redis looks up pre-computed features in <5ms

- Features (avg_watch_time, top_genre, recent_clicks) are returned

- Inference uses these features to run the model

Why This Matters:

- Computing features in real-time: ~500ms (too slow)

- Looking up pre-computed features: ~5ms (acceptable)

- The Feature Store trades storage for latency
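
The offline and online paths can be pictured with a small sketch. It assumes a plain dictionary in place of Redis and a list of tuples in place of the Data Lake; `build_features`, `get_features`, and the sample events are hypothetical names and data chosen for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical raw events: (user_id, genre, watch_seconds).
RAW_EVENTS = [
    ("u_12345", "scifi", 600),
    ("u_12345", "scifi", 300),
    ("u_12345", "cooking", 450),
    ("u_67890", "sports", 120),
]

def build_features(events):
    """Offline batch job: aggregate raw events into per-user features."""
    watch_times = defaultdict(list)
    genres = defaultdict(Counter)
    for user_id, genre, seconds in events:
        watch_times[user_id].append(seconds)
        genres[user_id][genre] += 1
    return {
        user_id: {
            "avg_watch_time": sum(times) / len(times),
            "top_genre": genres[user_id].most_common(1)[0][0],
        }
        for user_id, times in watch_times.items()
    }

# A dict stands in for the online key-value store (Redis in this design).
online_store = build_features(RAW_EVENTS)

# Returned for users with no pre-computed features (for example, new users).
DEFAULT_FEATURES = {"avg_watch_time": 0.0, "top_genre": None}

def get_features(user_id):
    """Online path: one key lookup, no scan over the raw logs."""
    return online_store.get(user_id, DEFAULT_FEATURES)

print(get_features("u_12345"))
print(get_features("u_unknown"))
```

The request path only ever calls `get_features`; all aggregation cost is paid by the batch job that fills the store.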

4. The Funnel Architecture (Serving Pipeline)

This is the most critical concept in recommendation system design. You cannot rank 10 million videos for every user request—it's computationally impossible. Instead, we use a multi-stage funnel.

Stage 1: Retrieval (Candidate Generation)

Goal: Quickly eliminate 99.99% of irrelevant videos.

Method: Collaborative Filtering, Matrix Factorization, or Two-Tower Embeddings.

Output: ~500 raw candidates.

Stage 2: Ranking (Scoring)

Goal: Precision. Order the 500 candidates by probability of engagement.

Method: Complex Deep Learning models (e.g., Wide & Deep, DLRM) using dense features.

Output: Sorted list of 500 videos with scores.

Stage 3: Re-Ranking (Business Logic)

Goal: Optimization and Policy. Logic:

- Diversity: Don't show 10 cat videos in a row.

- Freshness: Boost newly uploaded content.

- Fairness: Ensure creator diversity.

- Deduplication: Remove videos already watched.

Output: Final 10-20 videos sent to the user.
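
The three stages can be sketched end to end on toy data. This is a minimal illustration, not a production pipeline: retrieval is a brute-force dot product over five videos, the "ranking model" is a lookup table of made-up scores, and re-ranking applies only deduplication and a per-category cap. All names and values are hypothetical.

```python
def retrieve(user_vec, video_vecs, k):
    """Stage 1: cheap dot-product scoring, keep the top-k candidates."""
    def score(item):
        return sum(u * v for u, v in zip(user_vec, item[1]))
    scored = sorted(video_vecs.items(), key=score, reverse=True)
    return [video_id for video_id, _ in scored[:k]]

def rank(candidates, score_fn):
    """Stage 2: run the expensive scoring function on the candidates only."""
    return sorted(candidates, key=score_fn, reverse=True)

def rerank(ranked, watched, category_of, max_per_category, limit):
    """Stage 3: drop watched videos and cap how many items share a category."""
    feed, per_category = [], {}
    for video_id in ranked:
        category = category_of[video_id]
        if video_id in watched or per_category.get(category, 0) >= max_per_category:
            continue
        per_category[category] = per_category.get(category, 0) + 1
        feed.append(video_id)
        if len(feed) == limit:
            break
    return feed

# Toy catalogue: 2-d embeddings, categories, and stand-in ranking scores.
VIDEO_VECS = {
    "cat_1": [0.9, 0.1], "cat_2": [0.8, 0.2], "cat_3": [0.7, 0.1],
    "tech_1": [0.2, 0.9], "news_1": [-0.5, -0.5],
}
CATEGORY = {"cat_1": "cats", "cat_2": "cats", "cat_3": "cats",
            "tech_1": "tech", "news_1": "news"}
RANK_SCORES = {"cat_1": 0.9, "cat_2": 0.8, "cat_3": 0.7, "tech_1": 0.6, "news_1": 0.1}

user_vec = [1.0, 0.3]
candidates = retrieve(user_vec, VIDEO_VECS, k=4)
ranked = rank(candidates, RANK_SCORES.get)
feed = rerank(ranked, watched={"cat_1"}, category_of=CATEGORY,
              max_per_category=1, limit=2)
print(candidates, ranked, feed)
```

Each stage sees fewer items than the one before it, which is what lets the later stages afford more expensive logic per item.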

5. Core Algorithm: The Two-Tower Model

Modern retrieval uses "Two-Tower" Neural Networks to create embeddings. An embedding is a vector (a list of numbers, e.g., [0.1, -0.5, 0.8]) that represents the "essence" of a user or video.

Architecture

The Math

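The similarity score is usually the dot product of the user vector $\mathbf{u}$ and the video vector $\mathbf{v}$:

$$s(\mathbf{u}, \mathbf{v}) = \mathbf{u} \cdot \mathbf{v} = \sum_{i=1}^{d} u_i v_i$$

To turn this into a probability (e.g., probability of a click), we often use the Sigmoid function:

$$P(\text{click}) = \sigma(s) = \frac{1}{1 + e^{-s}}$$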

In Plain English: The "Two Towers" effectively map users and videos into the same geometric space. The model learns to place a user close to the videos they will like. The dot product is just a mathematical ruler measuring "how aligned are these two vectors?" If the user vector points North and the video vector points North, the score is high. If they point in opposite directions, the score is low.

Interview Tip: Why two separate towers? Because the Video Tower can be run offline. We compute vectors for all 10M videos once and store them. The User Tower runs online (real-time) to capture their latest mood, producing a query vector to search against the stored video vectors.
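
The offline/online split can be illustrated with a small scoring sketch. It assumes the towers have already been trained: the stored video vectors and the user vector below are toy values standing in for tower outputs, and `top_k` is a brute-force search used only to make the scoring visible.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def sigmoid(x):
    """Squash a raw score into the (0, 1) range."""
    return 1.0 / (1.0 + math.exp(-x))

# Video Tower output: computed offline and stored (toy 3-d vectors).
VIDEO_EMBEDDINGS = {
    "v_scifi": [0.9, 0.1, 0.0],
    "v_cooking": [0.0, 0.8, 0.3],
    "v_opposite": [-0.9, -0.1, 0.0],
}

def top_k(user_embedding, k):
    """Score every stored video against the query vector, best first."""
    scored = [
        (video_id, sigmoid(dot(user_embedding, vec)))
        for video_id, vec in VIDEO_EMBEDDINGS.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# User Tower output: computed at request time from fresh features.
user_embedding = [1.0, 0.2, 0.0]

for video_id, probability in top_k(user_embedding, k=3):
    print(f"{video_id}: {probability:.3f}")
```

A video pointing the same way as the user scores above 0.5, and one pointing the opposite way scores below it.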

Training: Loss Function & Negative Sampling

The model learns from positive pairs (user clicked video) and negative pairs (user did NOT click). Without negatives, the model would think every video is relevant.

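Loss Function: Binary Cross-Entropy

$$\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \log \sigma(\mathbf{u}_i \cdot \mathbf{v}_i) + (1 - y_i) \log\left(1 - \sigma(\mathbf{u}_i \cdot \mathbf{v}_i)\right) \right]$$

Where:

- $y_i = 1$ for positive interactions (clicks, watches)

- $y_i = 0$ for negative samples

- $\sigma$ = sigmoid function

- $\mathbf{u}_i \cdot \mathbf{v}_i$ = dot product of user and item embeddings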

In Plain English: The loss function penalizes the model when it predicts "high relevance" for videos the user ignored, and "low relevance" for videos the user loved. Over millions of examples, the model learns to distinguish good recommendations from bad ones.
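
A small numeric sketch makes the penalty concrete. It computes binary cross-entropy over labeled (user, video) pairs with hand-written helpers; the vectors are toy values, and the clamping constant is an implementation convenience rather than part of the loss definition.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def bce_loss(pairs):
    """Mean binary cross-entropy over (user_vec, video_vec, label) triples."""
    total = 0.0
    for user_vec, video_vec, label in pairs:
        p = sigmoid(dot(user_vec, video_vec))
        p = min(max(p, 1e-12), 1.0 - 1e-12)  # clamp to avoid log(0)
        total += -(label * math.log(p) + (1 - label) * math.log(1.0 - p))
    return total / len(pairs)

user = [1.0, 1.0]
liked_video = [2.0, 2.0]      # aligned with the user
ignored_video = [-2.0, -2.0]  # points the opposite way

# Predictions agree with the labels: low loss.
good_fit = bce_loss([(user, liked_video, 1), (user, ignored_video, 0)])
# Same vectors, labels flipped: the model is confidently wrong, high loss.
bad_fit = bce_loss([(user, liked_video, 0), (user, ignored_video, 1)])

print(f"good fit: {good_fit:.4f}, bad fit: {bad_fit:.4f}")
```

Training nudges the embeddings so that real data looks like the first case rather than the second.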

Negative Sampling Strategies

| Strategy | Description | Pros | Cons |

|---|---|---|---|

| Random Negatives | Sample random videos as negatives | Simple, fast | Too easy—model doesn't learn edge cases |

| Hard Negatives | Use videos user almost clicked but didn't | Strong learning signal | Can destabilize training |

| In-Batch Negatives | Other users' positives become your negatives | Computationally efficient | Popularity bias (popular items over-sampled) |

| Mixed Strategy | Combine 80% random + 20% hard | Balanced learning | More complex to implement |

Interview Tip: When discussing negative sampling, mention that "in-batch negatives" is the industry standard at scale (used by Google, YouTube, Pinterest) because it's computationally free—you reuse the other positive examples in the same training batch as negatives.
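
In-batch negatives are usually paired with a softmax loss over the batch rather than the per-pair binary loss. The sketch below is one common formulation, written in plain Python on toy vectors; it omits the popularity correction that production systems often add to counter the bias noted in the table, and the function name is illustrative.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def in_batch_softmax_loss(user_vecs, video_vecs):
    """Row i of each list is a positive (user, video) pair.

    Every other video in the batch serves as a negative for user i.
    """
    losses = []
    for i, user_vec in enumerate(user_vecs):
        logits = [dot(user_vec, video_vec) for video_vec in video_vecs]
        top = max(logits)  # subtract the max for numerical stability
        log_sum_exp = top + math.log(sum(math.exp(l - top) for l in logits))
        losses.append(log_sum_exp - logits[i])  # -log softmax of the positive
    return sum(losses) / len(losses)

users = [[2.0, 0.0], [0.0, 2.0]]
videos_aligned = [[2.0, 0.0], [0.0, 2.0]]   # each user matches its own video
videos_shuffled = [[0.0, 2.0], [2.0, 0.0]]  # each user matches the other's video

aligned_loss = in_batch_softmax_loss(users, videos_aligned)
shuffled_loss = in_batch_softmax_loss(users, videos_shuffled)
print(f"aligned: {aligned_loss:.4f}, shuffled: {shuffled_loss:.4f}")
```

No extra negatives are sampled or embedded: the batch's own positives are reused, which is where the efficiency comes from.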

Training Pipeline Architecture

How do embeddings get updated as user behavior changes?

Understanding the Training Pipeline:

This pipeline runs daily (incremental) and weekly (full retrain) to keep models fresh.

Data Pipeline (Top Row):

- Kafka ingests ~5 billion events per day from user interactions

- S3 (Raw Logs) stores events in compressed Parquet format

- Spark transforms raw logs into training features:

- Joins user features with video features

- Creates positive pairs (user clicked) and negative samples (user didn't click)

- Outputs training-ready datasets

- Training (GPU Cluster) trains the Two-Tower and ranking models

Model Deployment (Bottom Row):

- Model Artifacts are saved after training (weights, config)

- Validate runs offline metrics (AUC, NDCG) on held-out data

- Export Embeddings generates video vectors from the trained Video Tower

- Faiss Index builds a searchable index of 10M video embeddings

Timeline:

- Events → Features: 2-4 hours (Spark job)

- Features → Trained Model: 4-8 hours (GPU training)

- Model → Deployed Index: 1-2 hours (export + indexing)

- Total: ~8-14 hours for a full refresh

Key Decisions:

- User embeddings: Update frequently (hourly or real-time) to capture current session intent.

- Video embeddings: Update daily (content doesn't change as fast as user mood).

- Full retraining: Weekly, to incorporate new videos and decaying old patterns.

6. Deep Dive: Vector Search (The Retrieval Engine)

Once we have 10 million video vectors, how do we find the 500 closest ones to our user?

A standard loop `for video in all_videos: compute_score()` is too slow: it is $O(N)$ per query, which means 10 million dot products for every request.

We use Approximate Nearest Neighbor (ANN) algorithms.

Technology Choices

| Technology | Latency | Scale | Best For |

|---|---|---|---|

| Faiss (Meta) | <1ms | Billions | High-performance, self-hosted clusters. |

| ScaNN (Google) | <1ms | Billions | State-of-the-art accuracy/speed trade-off on CPUs. |

| Pinecone/Milvus | ~10ms | 100M+ | Vector databases with managed offerings, if you don't want to maintain index infrastructure yourself. |

How It Works (HNSW Index)

HNSW (Hierarchical Navigable Small World) builds a multi-layered graph. It's like a highway system for data.

- Start at the top layer (interstates) to get to the general neighborhood.

- Drop down to local roads to find the exact destination.

- This reduces search from $O(N)$ to roughly $O(\log N)$.

Real-World Example: Pinterest uses HNSW to search billions of images. When you click a photo, they convert it to a vector and find visually similar images in milliseconds using this graph traversal.
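
The "local roads" step can be illustrated with greedy search on a single neighbor graph. To be clear, this is not HNSW: there is no layer hierarchy, and the graph is a hand-built 2-d grid rather than one learned from the data. It only shows the core move of walking toward the query through neighbors instead of scanning every point. A real deployment would rely on a library such as Faiss.

```python
import math

SIZE = 20  # 20 x 20 grid of toy 2-d "embeddings"
points = {(x, y): (float(x), float(y)) for x in range(SIZE) for y in range(SIZE)}

def grid_neighbors(node):
    """Link each point to its up/down/left/right neighbors."""
    x, y = node
    candidates = [(x - 1, y), (x + 1, y), (x, y - 1), (x, y + 1)]
    return [c for c in candidates if c in points]

graph = {node: grid_neighbors(node) for node in points}

def greedy_search(graph, points, query, entry):
    """Move to the neighbor closest to the query until no neighbor improves."""
    current = entry
    current_dist = math.dist(points[current], query)
    evaluations = 1  # number of distance computations performed
    while True:
        best, best_dist = current, current_dist
        for neighbor in graph[current]:
            d = math.dist(points[neighbor], query)
            evaluations += 1
            if d < best_dist:
                best, best_dist = neighbor, d
        if best == current:
            return current, evaluations
        current, current_dist = best, best_dist

query = (13.2, 6.9)
found, evaluations = greedy_search(graph, points, query, entry=(0, 0))
exact = min(points, key=lambda node: math.dist(points[node], query))
print(found, exact, evaluations, len(points))
```

On this regular grid the greedy walk reaches the true nearest point while computing far fewer distances than a full scan. On real embeddings the result is approximate, and the upper layers of HNSW exist to shorten the walk further.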

7. Ranking Architecture (The Precision Layer)

After retrieval gives us ~500 candidates, we need precision. The ranking model can afford to be much heavier because it only runs on 500 items, not 10 million.

Model: Deep Learning Recommendation Model (DLRM) or Wide & Deep.

Understanding Wide & Deep:

Google's Wide & Deep model (2016) combines two complementary learning approaches in a single architecture:

Wide Component (Left Path): A simple linear model that takes sparse features and cross-product features as input. It excels at memorization — learning specific rules like "users who watched Video A also clicked Video B." Think of it as a lookup table of known patterns.

Deep Component (Right Path): A multi-layer neural network that takes dense embeddings as input. It excels at generalization — discovering new patterns like "Sci-Fi fans tend to enjoy Tech documentaries" even if that exact combination hasn't been seen before. The embedding layer compresses high-dimensional sparse features into dense 32-dimensional vectors.

Why Both? Memorization alone leads to overfitting on historical patterns. Generalization alone misses important specific associations. The combined output captures both.

- Wide Part: Memorizes specific interactions (e.g., "User 123 clicked Video 456"). Good for exceptions.

- Deep Part: Generalizes patterns (e.g., "Sci-Fi fans usually like Tech reviews"). Good for exploration.

Feature Crossing

We don't just feed raw data; we combine features.

Common Feature Engineering Examples:

| Feature Type | Raw Data | Engineered Feature | Why It Matters |

|---|---|---|---|

| Temporal | User timestamp | time_since_last_watch | User returning after 5 min acts differently than after 5 days. |

| Interaction | User History | category_affinity_score | "User watched 5 Sci-Fi videos in last 10 attempts" = 0.5 affinity. |

| Contextual | Device + Time | is_commuting | Short videos work better on mobile during 8am-9am. |

| Statistical | Video Clicks | ctr_at_position_k | Normalizes click rate by position bias. |

- `User_Country × Video_Language` (Does the user speak the video's language?)

- `Time_of_Day × Video_Category` (Cartoons in the morning, Horror at night?)
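
A feature cross is often implemented by joining the two raw values into one token and hashing it into a fixed number of buckets. The sketch below shows that idea along with the `category_affinity_score` from the table; the bucket count, function names, and token format are assumptions made for illustration.

```python
import hashlib

NUM_BUCKETS = 1_000  # hypothetical size of the cross-feature vocabulary

def cross_feature(name_a, value_a, name_b, value_b):
    """Join two categorical values into one token and hash it to a bucket id."""
    token = f"{name_a}={value_a}|{name_b}={value_b}"
    digest = hashlib.sha256(token.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % NUM_BUCKETS
    return token, bucket

def category_affinity(recent_categories, category):
    """Share of the user's recent watches that fall in the given category."""
    if not recent_categories:
        return 0.0
    return recent_categories.count(category) / len(recent_categories)

token, bucket = cross_feature("user_country", "US", "video_language", "en")
history = ["scifi"] * 5 + ["news"] * 3 + ["cooking"] * 2

print(token, bucket)
print(category_affinity(history, "scifi"))
```

The bucket id is what gets fed to the wide part of the model, so the model can learn a separate weight for each country and language combination without an explicit lookup table of every pair.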

Key Insight: The ranking stage is where you optimize for business objectives. You might have one model predicting "Probability of Click" (CTR) and another predicting "Probability of Completion" (CVR).

If you only optimize for clicks, you get clickbait. Adding a completion or watch-time objective pushes the ranking toward content people actually finish.

For a deeper dive into the statistical metrics behind these predictions, check out our guide on The Bias-Variance Tradeoff.

8. API Design

A well-designed recommendation API balances simplicity for clients with the diagnostic information engineers need for debugging and iteration.

Core Endpoints

Feed Recommendations:

GET /v1/recommendations/feed?user_id=u_12345&limit=10

Response:

{

"items": [

{

"video_id": "v_555",

"title": "System Design Interview Guide",

"thumbnail_url": "...",

"score": 0.98,

"source": "retrieval_algo_v2",

"tracking_token": "token_abc123"

},

...

],

"next_page_token": "page_2_xyz",

"latency_ms": 145

}

Key Design Decisions

| Field | Purpose | Why It Matters |

|---|---|---|

| `score` | Relevance score (0-1) | Useful for debugging ranking issues |

| `source` | Which algorithm generated this item | Helps track A/B test performance |

| `tracking_token` | Opaque token linking this impression to model state | Critical for joining impressions to clicks |

| `next_page_token` | Cursor for pagination | Avoids offset-based pagination problems |

| `latency_ms` | Server-side latency | Client can log for performance monitoring |

Why These Fields Matter

The tracking_token Pattern:

When a user sees a recommendation, you need to record which model version, which features, and which ranking produced that specific item. Later, when the user clicks (or doesn't click), you join this event with the impression data. Without this join, you cannot train your models properly.

The source Field:

When running A/B tests or using multiple retrieval algorithms, knowing which algorithm produced each recommendation lets you:

- Debug why certain items are showing up

- Measure per-algorithm performance

- Roll back specific algorithms without affecting others

Interview Tip: Always include a tracking_token in the response. When the user clicks the video, the client sends this token back. This allows the backend to join the "Click" event with the exact model version and features that generated the recommendation, which is crucial for debugging and training.
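
The impression-to-click join can be sketched with an in-memory log. This assumes a dictionary in place of the real impression store, and the function names, model version string, and feature payload are hypothetical.

```python
import uuid

# tracking_token -> impression context (stand-in for an impression log table).
impression_log = {}

def serve_item(user_id, video_id, model_version, features):
    """Record what produced this recommendation and hand back an opaque token."""
    token = uuid.uuid4().hex
    impression_log[token] = {
        "user_id": user_id,
        "video_id": video_id,
        "model_version": model_version,
        "features": features,
    }
    return {"video_id": video_id, "tracking_token": token}

def build_training_rows(click_tokens):
    """Join impressions with clicks: label 1 if the token came back, else 0."""
    clicked = set(click_tokens)
    return [
        {**context, "label": int(token in clicked)}
        for token, context in impression_log.items()
    ]

shown_a = serve_item("u_12345", "v_555", "ranker_v7", {"top_genre": "scifi"})
shown_b = serve_item("u_12345", "v_777", "ranker_v7", {"top_genre": "scifi"})

# The client echoes the token back only for the video that was clicked.
rows = build_training_rows([shown_a["tracking_token"]])
for row in rows:
    print(row["video_id"], row["model_version"], row["label"])
```

Impressions whose token never returns become the negative examples, each still tied to the exact features and model version that produced it.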

Related Endpoints

Most recommendation systems also need these additional endpoints:

- `POST /v1/events/impression` - Log when items are shown to users

- `POST /v1/events/click` - Log when items are clicked

- `GET /v1/recommendations/similar?item_id=v_555` - Similar item recommendations

- `GET /v1/recommendations/search?query=ml+tutorials` - Search with personalization

9. Cold Start Handling

The cold start problem is one of the most common interview questions for recommendation systems. It occurs when we have no interaction history for a user or an item.

The Decision Tree Logic:

- Is the User New? If yes, we can't use history. We fall back to Geographic Popularity (what's trending in their city) or Demographic Groups (if age/gender is known).

- Is the Item New? If yes, it has no interaction data. We rely on Content-Based Filtering (using BERT to analyze video title/description) or Bandits (force-showing it to a few users to gather data).

Understanding the Cold Start Decision Flow:

The diagram shows the decision tree that runs for every recommendation request:

Step 1: User Check When a request arrives with user context, the first question is "Is this user new?" The system checks if there's any interaction history.

If User is New (Top Branch): Two fallback strategies kick in:

- Popularity: Show globally trending content that most users enjoy

- Demographics: Use region/language to find similar users and show what they watch

Step 2: Item Check For users with history, the next question is "Are we considering new items?" Items without engagement data need special handling.

If Item is New (Middle Branch):

- Content-Based: Use the video's title, tags, and thumbnail to create embeddings—no user interaction needed

- Exploration Budget: Reserve 5% of impressions for new content to gather initial signals

Standard Path (Bottom): If both user and item have history, use the full Two-Tower pipeline with collaborative filtering.

The Key Insight: Cold start isn't one problem—it's a decision tree with different solutions at each branch.
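
The decision tree maps naturally onto a small routing function. This is a sketch under assumed thresholds: the minimum event and impression counts are made-up values, and the strategy names are labels for the branches described above rather than real service names.

```python
# Hypothetical thresholds for "has enough history"; tune per product.
MIN_USER_EVENTS = 5
MIN_ITEM_IMPRESSIONS = 100

def choose_strategy(user_event_count, item_impression_count):
    """Pick the recommendation path for one (user, candidate item) pair."""
    if user_event_count < MIN_USER_EVENTS:
        # New user: no history to personalize on.
        return "popularity_or_demographics"
    if item_impression_count < MIN_ITEM_IMPRESSIONS:
        # Known user, new item: rely on content features plus exploration.
        return "content_based_plus_exploration"
    # Both sides have history: full collaborative pipeline.
    return "two_tower"

print(choose_strategy(user_event_count=0, item_impression_count=50_000))
print(choose_strategy(user_event_count=240, item_impression_count=3))
print(choose_strategy(user_event_count=240, item_impression_count=50_000))
```

The user check comes first because a new user cannot be personalized for regardless of how much data the item has.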

New User Strategies

| Strategy | How It Works | Pros | Cons |

|---|---|---|---|

| Global Popularity | Show trending/most-watched videos | Simple, always works | No personalization |

| Demographic Similarity | Find similar users by age/location | Some personalization | Privacy concerns |

| Onboarding Quiz | Ask explicit preferences | High-quality signal | User friction |

| Exploration | Show diverse content, learn fast | Builds profile quickly | Initial experience may be poor |

New Item Strategies

| Strategy | How It Works | Pros | Cons |

|---|---|---|---|

| Content Features | Use title, tags, thumbnail embeddings | Immediate availability | Ignores taste |

| Creator Transfer | New video inherits creator's audience | Leverages existing data | Unfair to new creators |

| Freshness Boost | Temporarily boost new content score | Ensures visibility | May show low-quality content |

| Exploration Budget | Reserve 5-10% of impressions for new items | Gathers signal fast | Slight quality hit |

Interview Tip: When asked about cold start, don't just say "use popularity." Show you understand the trade-off: popularity works but creates a "rich get richer" problem. Mention exploration/exploitation (Multi-Armed Bandits, Thompson Sampling) to show depth.

Exploration vs Exploitation: The Core Trade-off

The cold start interview tip above mentions exploration/exploitation — this concept is so important it deserves its own discussion.

Understanding the Trade-off:

Every recommendation is a choice: show what you know the user likes (exploit) or show something new to learn their preferences (explore). Pure exploitation creates filter bubbles. Pure exploration feels random.

The Three Main Strategies:

| Strategy | How It Works | When to Use |

|---|---|---|

| Epsilon-Greedy | Show the best item with probability 1 − ε; show a random item with probability ε | Simple baseline; works well with ε around 0.05-0.10 |

| UCB (Upper Confidence Bound) | Score = estimated_reward + uncertainty_bonus. Items with fewer impressions get a higher bonus. | When you need deterministic exploration |

| Thompson Sampling | Sample from each item's reward distribution. Items with high uncertainty get sampled more often. | Best theoretical guarantees; production-proven at Spotify, Netflix |

Thompson Sampling in Practice:

For each candidate item, maintain a Beta distribution of its click probability:

- Start with Beta(1, 1) — uniform prior (no opinion)

- After each click: Beta(alpha + 1, beta)

- After each skip: Beta(alpha, beta + 1)

- To rank: sample from each item's distribution and sort

Items with few impressions have wide distributions (high variance), so they occasionally sample high values and get shown — this is exploration. Items with many impressions have narrow distributions centered on their true CTR — this is exploitation.
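
The update and ranking rules fit in a few lines using the standard library's Beta sampler. The simulation below is a toy: the "true" click rates are invented and hidden from the policy, and only the top-ranked item is shown each round.

```python
import random

class BetaArm:
    """Beta posterior over one item's click probability."""

    def __init__(self):
        self.alpha = 1.0  # 1 + number of clicks
        self.beta = 1.0   # 1 + number of skips

    def update(self, clicked):
        if clicked:
            self.alpha += 1
        else:
            self.beta += 1

    def sample(self, rng):
        return rng.betavariate(self.alpha, self.beta)

def thompson_rank(arms, rng):
    """Draw one sample per item and sort items by the sampled value."""
    draws = {item: arm.sample(rng) for item, arm in arms.items()}
    return sorted(draws, key=draws.get, reverse=True)

# Hypothetical true click rates, unknown to the policy.
TRUE_CTR = {"v_good": 0.30, "v_ok": 0.10, "v_bad": 0.02}
ROUNDS = 2000

rng = random.Random(42)
arms = {item: BetaArm() for item in TRUE_CTR}
for _ in range(ROUNDS):
    shown = thompson_rank(arms, rng)[0]
    arms[shown].update(rng.random() < TRUE_CTR[shown])

impressions = {item: int(arm.alpha + arm.beta - 2) for item, arm in arms.items()}
print(impressions)
```

Early on all three items get shown; as evidence accumulates, impressions concentrate on the item with the highest click rate while the others are still sampled occasionally.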

Interview Tip: If asked "How do you balance exploration and exploitation?", mention Thompson Sampling by name. It's used at Netflix, Spotify, and LinkedIn in production. The key advantage over epsilon-greedy is that it explores intelligently — items that might be good get explored more than items that are likely bad.

10. Evaluation Metrics

You cannot improve what you cannot measure. Recommendation systems require BOTH offline and online metrics.

Offline Metrics (Before Deployment)

These are computed on historical data before pushing a model to production.

| Metric | Formula | What It Measures | Target |

|---|---|---|---|

| Precision@K | (Relevant in Top K) / K | Of the K shown, how many were relevant? | > 0.3 |

| Recall@K | (Relevant in Top K) / Total Relevant | Of ALL relevant items, how many did we find? | > 0.5 |

| NDCG@K | DCG / IDCG | Ranking quality (rewards good items at top positions) | > 0.7 |

| AUC | Area Under ROC | Click prediction accuracy | > 0.8 |
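
The first three formulas are short enough to write out directly. The sketch below assumes binary relevance (an item is either relevant or not) and uses the common log2 discount for NDCG; the item ids are placeholders.

```python
import math

def precision_at_k(ranked, relevant, k):
    """Fraction of the top-k items that are relevant."""
    return sum(1 for item in ranked[:k] if item in relevant) / k

def recall_at_k(ranked, relevant, k):
    """Fraction of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for item in ranked[:k] if item in relevant) / len(relevant)

def ndcg_at_k(ranked, relevant, k):
    """DCG of the ranking divided by the DCG of an ideal ranking."""
    dcg = sum(
        1.0 / math.log2(position + 2)
        for position, item in enumerate(ranked[:k])
        if item in relevant
    )
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(position + 2) for position in range(ideal_hits))
    return dcg / idcg if idcg else 0.0

ranked = ["a", "b", "c", "d", "e"]  # model output, best first
relevant = {"a", "c", "z"}          # what the user actually engaged with

print(f"precision@5 = {precision_at_k(ranked, relevant, 5):.3f}")
print(f"recall@5    = {recall_at_k(ranked, relevant, 5):.3f}")
print(f"ndcg@5      = {ndcg_at_k(ranked, relevant, 5):.3f}")
```

Precision and recall ignore order within the top K, while NDCG rewards placing the relevant items near the top.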

Online Metrics (A/B Testing in Production)

These are the real business metrics measured after deployment.

| Metric | What It Measures | Why It Matters |

|---|---|---|

| CTR | Click-through rate | Immediate engagement signal |

| Watch Time | Total minutes watched | Quality of recommendations |

| Completion Rate | % of videos watched to end | Content-match quality |

| D1/D7 Retention | Users returning after 1/7 days | Long-term platform health |

| Diversity | Unique categories in feed | Prevents filter bubbles |

Understanding the Metrics Hierarchy:

The diagram shows how different metrics relate and why you need all of them.

North Star Metric (Top):

- Watch Time is the primary metric for video platforms

- It represents genuine user value—users don't watch content they don't enjoy

- All other metrics should ultimately drive this number

Leading Indicators (Left - "Gameable"): These metrics move quickly but can be manipulated:

- CTR (Click-Through Rate): How often users click recommendations

- Completion Rate: What percentage of videos are watched to the end

Warning: Optimizing CTR alone leads to clickbait. High CTR with low completion means users clicked but didn't enjoy the content.

Lagging Indicators (Right - "Slow"): These metrics are the true measure of success but take time to measure:

- D7 Retention: Do users return after 7 days?

- Revenue/LTV: Long-term user value

The Balance: You need both types:

- Leading indicators give fast feedback for iteration

- Lagging indicators confirm you're building real value

Common Pitfall: Optimizing only for CTR leads to clickbait. Optimizing only for watch time leads to addictive content. The best systems rank by a weighted combination of several predicted outcomes rather than any single metric.
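
One way to picture a weighted combination is a linear blend of per-objective predictions. The weights and prediction values below are invented for illustration; in practice the weights would be tuned through A/B tests against the lagging indicators.

```python
# Hypothetical weights over per-objective model predictions.
WEIGHTS = {"p_click": 0.2, "p_complete": 0.5, "p_like": 0.3}

def combined_score(predictions, weights=WEIGHTS):
    """Weighted sum of the predicted probabilities for one candidate."""
    return sum(weight * predictions[name] for name, weight in weights.items())

# A clickbait-style video: very clickable, rarely finished or liked.
clickbait = {"p_click": 0.90, "p_complete": 0.10, "p_like": 0.05}
# A solid video: fewer clicks, but most viewers finish it.
solid = {"p_click": 0.40, "p_complete": 0.70, "p_like": 0.30}

print(f"clickbait: {combined_score(clickbait):.3f}")
print(f"solid:     {combined_score(solid):.3f}")
```

With these weights the solid video outranks the clickbait one even though its predicted click rate is less than half as high.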

11. Trade-offs & Alternatives

Every design decision has a cost. Here are the major ones for this architecture.

Architectural Decisions

| Decision | Option A | Option B | We Chose | Why |

|---|---|---|---|---|

| Embedding Update | Real-time | Batch (Daily) | Hybrid | User embeddings update near real-time (to catch immediate interests), Video embeddings update daily (content changes slowly). |

| Database | SQL (Postgres) | NoSQL (Cassandra) | Both | Postgres for user metadata (ACID compliance), Cassandra/DynamoDB for interaction logs (massive write throughput). |

| Serving | Pre-compute (Cache) | On-the-fly | On-the-fly | Pre-computing fails for active users whose context changes fast. We only cache the "Head" (popular) queries. |

| Model Format | TensorFlow SavedModel | ONNX | ONNX | Framework-agnostic, works with Triton Inference Server, easier to optimize. |

| Vector DB | Self-hosted Faiss | Managed Pinecone | Faiss on K8s | Cost savings at scale. Pinecone gets expensive at 10M+ vectors. |

Model Architecture Trade-offs

| Approach | Latency | Accuracy | Cold Start | Interpretability |

|---|---|---|---|---|

| Matrix Factorization | Very Fast | Good | Poor | Medium |

| Two-Tower DNN | Fast | Very Good | Good | Low |

| Transformer (Sequential) | Slow | Excellent | Excellent | Very Low |

| Graph Neural Network | Medium | Very Good | Good | Low |

Interview Tip: When asked "why not use Transformers everywhere?", explain the latency constraint. Self-attention costs $O(n^2)$ in sequence length. For retrieval over 10M items, even a small Transformer per item is prohibitive. We use Transformers only in ranking (500 items) or for encoding text features offline.

Case Study: Netflix vs. TikTok

| Feature | Netflix | TikTok |

|---|---|---|

| Context | "Lean Back" (TV, 2-hour movies) | "Lean Forward" (Mobile, 15s clips) |

| Signal Density | Low (1 movie decision per night) | Extremely High (100 swipes per session) |

| Exploration | Low Risk (Users stick to safe choices) | High Risk (Must show new viral trends instantly) |

| Architecture | Heavy reliance on long-term history | Heavy reliance on immediate session context (RNNs/Transformers) |

Netflix focuses on accuracy because starting a bad 2-hour movie is painful. TikTok focuses on speed and adaptation because skipping a bad 15-second video is costless.

12. Real-World Company Architectures

Theory is valuable, but seeing how industry leaders solve these problems at scale is invaluable. Let's examine four companies that have publicly shared details about their recommendation systems.

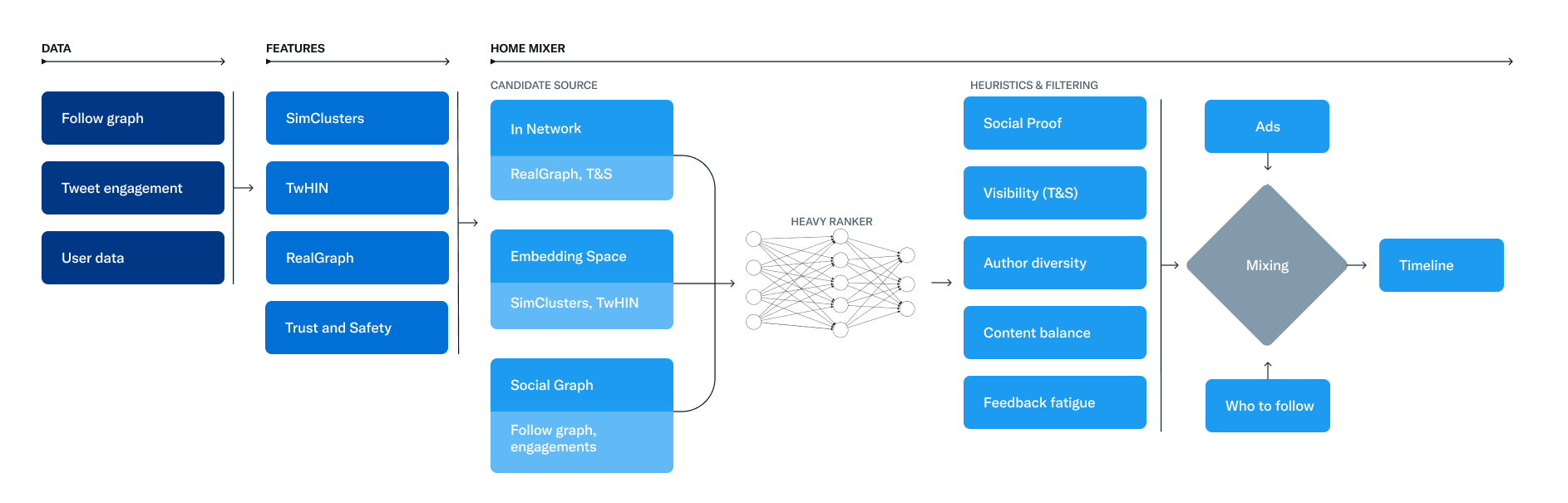

Twitter/X: The For You Timeline

In March 2023, Twitter open-sourced their recommendation algorithm, providing unprecedented insight into a production system serving hundreds of millions of users.

Twitter/X Recommendation Algorithm Architecture

Source: Twitter Engineering Blog

Key Components:

| Component | What It Does | Scale |

|---|---|---|

| GraphJet | Real-time bipartite graph engine maintaining user-tweet interactions. Uses SALSA random walks to find relevant out-of-network tweets. | Serves ~15% of timeline tweets |

| SimClusters | Matrix factorization creating 145k community clusters. Users and tweets are embedded into this community space. | Updated every 3 weeks |

| TwHIN | Twitter's Heterogeneous Information Network embeddings for content understanding | Billions of edges |

| Heavy Ranker | 48 million parameter neural network optimizing for Likes, Retweets, and Replies | Scores ~1,500 candidates per request |

The Funnel in Practice:

- Candidate Sources generate ~1,500 tweets from In-Network (people you follow) and Out-of-Network (GraphJet, SimClusters)

- Heavy Ranker scores all candidates with the 48M-param model

- Heuristics & Filters apply business rules (diversity, author limits, content policy)

- Mixing blends different candidate sources into the final timeline

Interview Insight: When Twitter engineers mention "In-Network" vs "Out-of-Network," they're distinguishing between tweets from accounts you follow (easy) versus discovering tweets from accounts you don't follow (hard). The hard part—Out-of-Network—is where GraphJet and SimClusters shine.

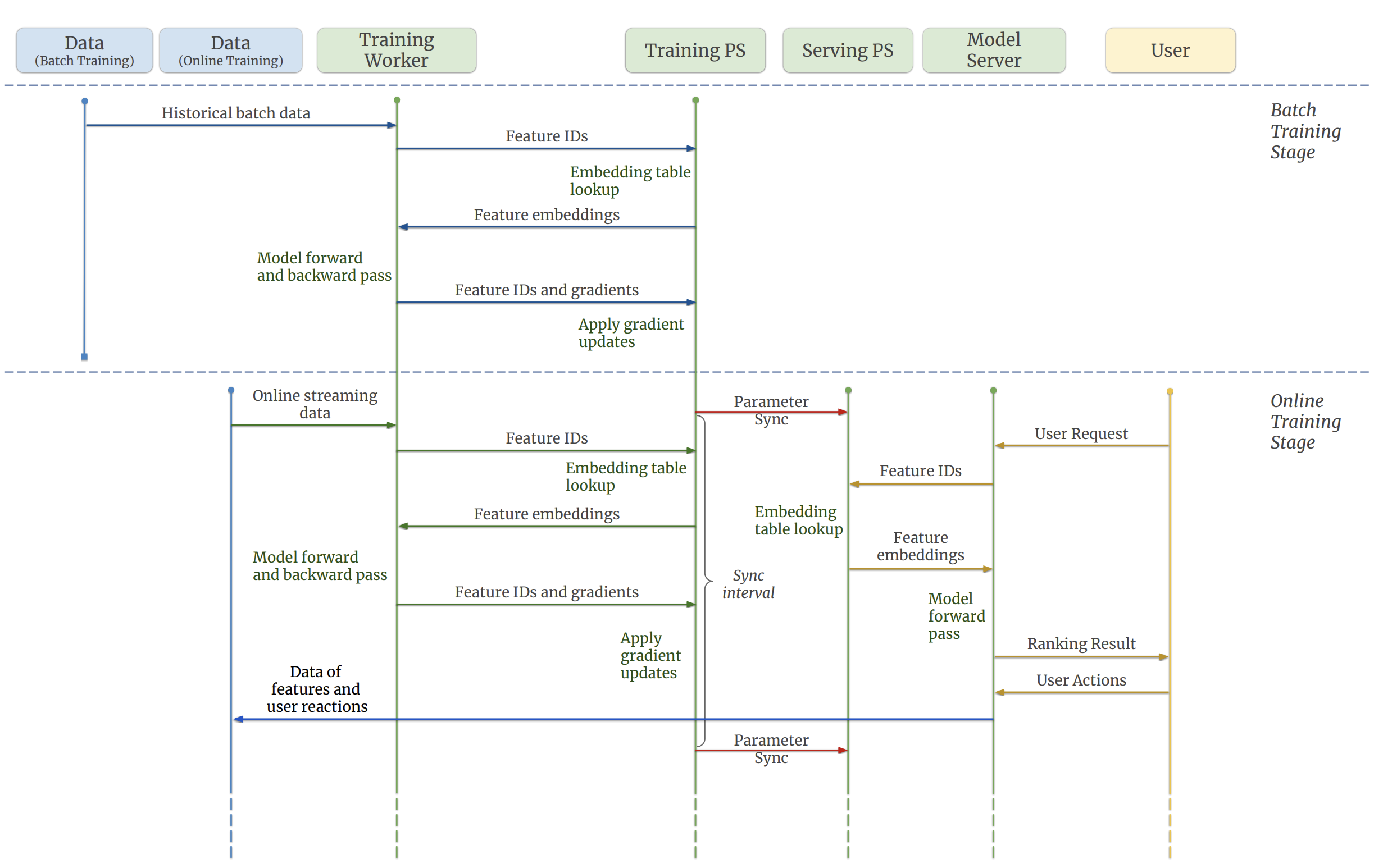

TikTok/ByteDance: Monolith

TikTok's recommendation system is arguably the most sophisticated in the world. Their 2022 paper "Monolith: Real Time Recommendation System With Collisionless Embedding Table" reveals key innovations.

TikTok Monolith Online Training Architecture

Source: Monolith arXiv Paper - Figure 1

The Problem TikTok Solved:

Traditional recommendation systems have a fatal flaw: the model serving predictions was trained hours or days ago. User interests shift in minutes on TikTok. If you suddenly start watching cooking videos, the old model doesn't know.

Monolith's Solution: Real-Time Training

Key Innovations:

| Innovation | How It Works | Why It Matters |

|---|---|---|

| Collisionless Embedding Table | Uses Cuckoo Hashing instead of standard hash tables. Each ID gets a unique embedding without collisions. | Eliminates quality degradation from hash collisions at scale |

| Dual Parameter Servers | Training-PS learns from live data; Serving-PS handles inference. They sync every few minutes. | Model adapts in near real-time |

| Expirable Embeddings | Old user/video embeddings automatically expire after N days | Keeps memory bounded, removes stale data |

| Frequency Filtering | Users must have X logins, videos must have Y views before getting embeddings | Reduces noise, focuses on engaged users |

The Math Behind Cuckoo Hashing:

Standard embedding tables use hash(user_id) % table_size, which causes collisions: two different users can map to the same embedding. At TikTok's scale (billions of user and video IDs), collisions degrade recommendation quality.

Cuckoo hashing uses two hash functions, so every key has two candidate slots. If slot h1(key) is occupied, the new key takes it and the existing item is "kicked out" to its own alternate slot, evicting that occupant in turn if needed. This continues until every key has a slot (the table is resized if the eviction chain gets too long), so no two IDs ever share an embedding.
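
The eviction chain is easier to follow in code. Below is a minimal sketch of a cuckoo hash map in pure Python, not Monolith's implementation: the `CuckooTable` class, the salted SHA-256 hash functions, the `max_kicks` limit, and the doubling strategy are all assumptions made for illustration.

```python
import hashlib


class CuckooTable:
    """Toy cuckoo hash map: every key owns exactly one slot, so no two IDs share a row."""

    def __init__(self, capacity=8, max_kicks=32):
        self.capacity = capacity
        self.max_kicks = max_kicks
        # Two tables, one per hash function; each slot holds (key, value) or None.
        self.tables = [[None] * capacity, [None] * capacity]

    def _slot(self, which, key):
        # Salting the key with the table index yields two different hash functions.
        digest = hashlib.sha256(f"{which}:{key}".encode()).digest()
        return int.from_bytes(digest[:8], "big") % self.capacity

    def get(self, key):
        for which in (0, 1):
            entry = self.tables[which][self._slot(which, key)]
            if entry is not None and entry[0] == key:
                return entry[1]
        return None

    def put(self, key, value):
        # Update in place if the key is already stored.
        for which in (0, 1):
            idx = self._slot(which, key)
            entry = self.tables[which][idx]
            if entry is not None and entry[0] == key:
                self.tables[which][idx] = (key, value)
                return
        item, which = (key, value), 0
        for _ in range(self.max_kicks):
            idx = self._slot(which, item[0])
            # Place the item and pick up whatever was there (None if the slot was free).
            item, self.tables[which][idx] = self.tables[which][idx], item
            if item is None:
                return
            which = 1 - which  # the evicted entry tries its slot in the other table
        # Too many evictions: grow and reinsert everything, including the homeless item.
        self._grow(item)

    def _grow(self, pending):
        entries = [e for t in self.tables for e in t if e is not None] + [pending]
        self.capacity *= 2
        self.tables = [[None] * self.capacity, [None] * self.capacity]
        for k, v in entries:
            self.put(k, v)


table = CuckooTable()
for user_id in range(100):
    table.put(user_id, [float(user_id)])  # stand-in for an embedding vector
```

Lookups check at most two slots, which keeps reads cheap; the price is occasional expensive inserts when a long eviction chain forces a resize.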

Interview Insight: When asked "How would you make recommendations real-time?", mention TikTok's Training-PS / Serving-PS architecture with minute-level parameter synchronization. This shows you understand that "real-time" doesn't mean training on every click—it means fast feedback loops.

Pinterest: PinSage and Pinnability

Pinterest faces a unique challenge: their content is primarily visual, and user intent is often aspirational (planning a wedding, remodeling a kitchen) rather than immediate.

Source: PinSage KDD 2018 Paper

PinSage: Graph Neural Networks at Scale

Pinterest developed PinSage, a Graph Convolutional Network operating on a graph with 3 billion nodes and 18 billion edges—10,000x larger than typical GCN applications.

How PinSage Works:

- Graph Construction: Pins (images) and boards are nodes. An edge connects a pin to each board it has been saved to, so pins that share a board are two hops apart.

- Random Walk Sampling: Instead of processing the entire graph, PinSage samples neighborhoods via random walks.

- Importance Pooling: Neighbors are weighted by visit frequency from random walks, not treated equally.

- Aggregation: Each pin's embedding is computed by aggregating its neighbors' features through neural network layers.
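
The sampling and pooling steps above can be sketched on a toy graph. This is an illustrative approximation rather than Pinterest's code: the four-pin graph, the feature vectors, and the function names are hypothetical, and the learned neural network layers that follow pooling in PinSage are omitted.

```python
import random
from collections import Counter

# Hypothetical toy bipartite graph: each pin lists its boards, each board lists its pins.
pin_to_boards = {"p1": ["b1", "b2"], "p2": ["b1"], "p3": ["b1", "b2"], "p4": ["b2"]}
board_to_pins = {"b1": ["p1", "p2", "p3"], "b2": ["p1", "p3", "p4"]}
pin_features = {"p1": [1.0, 0.0], "p2": [0.0, 1.0], "p3": [1.0, 1.0], "p4": [0.5, 0.5]}


def sample_neighbors(pin, num_walks=200, walk_length=3, top_k=2, seed=0):
    """Run short random walks from `pin`; return the top_k most-visited pins with weights."""
    rng = random.Random(seed)
    visits = Counter()
    for _ in range(num_walks):
        current = pin
        for _ in range(walk_length):
            board = rng.choice(pin_to_boards[current])  # pin -> board hop
            current = rng.choice(board_to_pins[board])  # board -> pin hop
            if current != pin:
                visits[current] += 1
    top = visits.most_common(top_k)
    total = sum(count for _, count in top)
    return {neighbor: count / total for neighbor, count in top}


def importance_pool(pin):
    """Average neighbor features, weighted by normalized random-walk visit counts."""
    weights = sample_neighbors(pin)
    dim = len(pin_features[pin])
    return [sum(w * pin_features[n][d] for n, w in weights.items()) for d in range(dim)]


neighbors = sample_neighbors("p1")
pooled = importance_pool("p1")
```

Because "p3" shares both boards with "p1", the walks visit it most often and it dominates the pooled vector, which is the intent of importance pooling.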

Pinnability: The Ranking Model

The Pinnability model predicts personalized pin relevance using:

- Pin Signals: PinSage embedding, visual features, text annotations

- User Signals: Long-term interests (yearly), short-term context (session-level)

- Context Signals: Time of day, device, surface (home feed vs search)

Dual Timeframe Architecture:

Pinterest separates user modeling into two distinct timeframes:

| Timeframe | Model | Updates | Captures |

|---|---|---|---|

| Long-term | Yearly Transformer predicting 14-day engagement | Weekly | Stable interests (home decor style, cuisine preferences) |

| Short-term | Session-level sequence model | Real-time | Immediate intent (currently planning a birthday party) |

Interview Insight: Pinterest's dual-timeframe approach is brilliant for interview discussions. It shows you understand that "user preferences" aren't monolithic—someone's decade-long love of minimalist design is different from their current obsession with planning a tropical vacation.

YouTube: Multi-Objective Optimization

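YouTube's 2016 paper "Deep Neural Networks for YouTube Recommendations" introduced the two-stage deep learning design (candidate generation followed by ranking) that the two-tower architecture builds on. Their 2019 follow-up, "Recommending What Video to Watch Next", introduced multi-objective optimization.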

Source: Google Research RecSys 2016

The Problem with Single Objectives:

Early YouTube optimized purely for click-through rate (CTR). Result? Clickbait. Thumbnails promised drama that videos didn't deliver. Users clicked but didn't watch.

Then they optimized for watch time. Result? Addictive, often low-quality content that kept users watching but left them feeling worse.

The Solution: Multi-Objective Ranking

YouTube now optimizes multiple objectives with the MMoE (Multi-gate Mixture-of-Experts) architecture:

Understanding the MMoE Architecture:

The key innovation: instead of a single neural network trying to predict everything, MMoE uses multiple expert sub-networks that each learn different patterns in the data. Two gating networks (one per task) learn how to weight the experts for each objective.

- The Engagement Gate might weight Expert 1 and Expert 3 heavily (they learned CTR patterns)

- The Satisfaction Gate might weight Expert 2 and Expert 4 (they learned quality patterns)

- This allows shared learning (experts are shared) alongside task-specific specialization (gates are separate)
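
To make the gating idea concrete, here is a minimal forward-pass sketch in pure Python. It is an illustration under simplifying assumptions, not YouTube's model: experts are single linear layers with ReLU, the weights are hand-picked rather than learned, and the per-task towers that normally sit on top of the mixed representation are left out.

```python
import math


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(weights, x):
    """Apply a weight matrix (list of rows) to the input vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def mmoe_forward(x, expert_weights, gate_weights):
    """Return one mixed representation per task.

    expert_weights: one weight matrix per expert (shared by all tasks).
    gate_weights:   one weight matrix per task, with one row per expert.
    """
    # Each shared expert transforms the input (ReLU for non-linearity).
    expert_outputs = [[max(0.0, v) for v in linear(w, x)] for w in expert_weights]
    dim = len(expert_outputs[0])
    task_representations = {}
    for task, gate_w in gate_weights.items():
        gate = softmax(linear(gate_w, x))  # task-specific mixture over the experts
        task_representations[task] = [
            sum(g * out[d] for g, out in zip(gate, expert_outputs)) for d in range(dim)
        ]
    return task_representations


# Hypothetical hand-picked weights: 2 input features, 2 experts, 2 tasks.
experts = [
    [[1.0, 0.0], [0.0, 1.0]],   # expert 0 passes features through
    [[0.5, 0.5], [1.0, -1.0]],  # expert 1 mixes them
]
gates = {
    "engagement": [[2.0, 0.0], [0.0, 0.0]],    # leans on expert 0
    "satisfaction": [[0.0, 0.0], [0.0, 2.0]],  # leans on expert 1
}
outputs = mmoe_forward([1.0, 1.0], experts, gates)
```

Both tasks read from the same two experts, but each gate produces a different mixture, so the two task representations differ even though no expert is duplicated.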

Objective Categories:

| Category | Metrics | What They Capture |

|---|---|---|

| Engagement | Clicks, Watch Time, Completion Rate | User attention (but not necessarily satisfaction) |

| Satisfaction | Likes, Shares, Survey Responses | User value (did they enjoy it?) |

| Quality | Authoritative sources, E-A-T signals | Content trustworthiness |

The MMoE Architecture:

Instead of a single neural network, MMoE uses multiple "expert" sub-networks, each specializing in different patterns. A gating network per objective learns which experts to consult for that objective.

The per-objective predictions are then merged into a single ranking score through a weighted combination. The weights (w₁, w₂, ...) are tuned based on business priorities and A/B test results.
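
As a rough illustration of how such weights might be applied, the sketch below uses a plain weighted sum. The objective names and weight values are hypothetical, and a production system may use a different combination function.

```python
def combine_objectives(predictions, weights):
    """Weighted sum of per-objective predictions; the weights are business-tuned."""
    missing = set(weights) - set(predictions)
    if missing:
        raise KeyError(f"missing predictions for: {sorted(missing)}")
    return sum(weights[name] * predictions[name] for name in weights)


# Hypothetical weights and model outputs, for illustration only.
weights = {"p_click": 0.2, "p_watch_50pct": 0.5, "p_like": 0.3}
clickbait = {"p_click": 0.9, "p_watch_50pct": 0.1, "p_like": 0.05}
solid_video = {"p_click": 0.4, "p_watch_50pct": 0.7, "p_like": 0.3}

clickbait_score = combine_objectives(clickbait, weights)
solid_score = combine_objectives(solid_video, weights)
```

With these example weights the clickbait video loses despite its higher click probability, because watch and like predictions carry most of the weight.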

Handling Position Bias:

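Users tend to click items shown at the top regardless of relevance. YouTube adds a "shallow tower" that learns position bias separately from position-related features. During training, its output is added to the main model's logit so the main model does not have to explain position effects; at serving time the position feature is treated as missing, which removes the learned bias from the score. This prevents the model from learning "position 1 is always good."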

Interview Insight: If asked "How do you balance engagement vs quality?", reference YouTube's multi-objective approach. The key insight is that you cannot optimize for a single metric—you need a weighted combination, and the weights are a business decision informed by A/B tests.

13. Beyond Two-Tower: Sequential & Transformer Models

The Two-Tower architecture excels at capturing static preferences, but users aren't static. Their interests evolve within a single session. Sequential models capture this dynamic behavior.

The Limitation of Two-Tower

Two-Tower creates a single user embedding from their history. But consider:

- User watches: [Action Movie → Action Movie → Cooking Show → Cooking Show → Cooking Show]

- Two-Tower embedding: "40% action, 60% cooking" (an order-blind average)

- Reality: User clearly shifted from action to cooking!
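
A small sketch makes the limitation visible. It assumes the user tower simply averages item embeddings, which is a simplification (real towers are learned networks), and compares that with a hypothetical recency-weighted pool; the 2-d embeddings and the `decay` value are made up for illustration.

```python
# Hypothetical 2-d item embeddings: axis 0 = "action", axis 1 = "cooking".
ACTION, COOKING = [1.0, 0.0], [0.0, 1.0]
history = [ACTION, ACTION, COOKING, COOKING, COOKING]  # oldest -> newest


def mean_pool(vectors):
    """Order-blind average, as a very simple user tower might compute."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def recency_pool(vectors, decay=0.5):
    """Exponentially down-weight older items; the newest item has weight 1."""
    n = len(vectors)
    weights = [decay ** (n - 1 - i) for i in range(n)]
    total = sum(weights)
    return [
        sum(w * v[d] for w, v in zip(weights, vectors)) / total
        for d in range(len(vectors[0]))
    ]


static_profile = mean_pool(history)
recent_profile = recency_pool(history)
shuffled_profile = mean_pool(list(reversed(history)))
```

Mean pooling returns the same blended profile for any ordering of the five videos, while the recency-weighted pool puts more than 90% of its mass on cooking. Sequential models learn this kind of weighting instead of hard-coding a decay.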

SASRec: Self-Attentive Sequential Recommendation

SASRec (2018) applies self-attention (the mechanism behind Transformers) to sequential recommendation.

Architecture:

SASRec Pipeline:

- Input: User interaction sequence [item₁, item₂, item₃, ..., itemₙ]

- Embedding Layer: Item embeddings + positional encodings

- Self-Attention Blocks: L transformer layers

- Output: Prediction for next item (itemₙ₊₁)

Key Insight: Self-attention allows each position to attend to all previous positions, learning which past interactions are most relevant for predicting the next one. Recent items often matter more, but not always—a user might return to an interest from weeks ago.
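
The causal masking behind this can be sketched in a few lines. The code below is a single-head illustration in pure Python, not the SASRec reference implementation: it skips the learned query/key/value projections, positional encodings, and feed-forward layers, and the 2-d embeddings are hypothetical.

```python
import math


def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention where position i only sees positions <= i."""
    dim = len(queries[0])
    out_dim = len(values[0])
    outputs, attention = [], []
    for i, q in enumerate(queries):
        # Score the current and earlier positions only (this is the causal mask).
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(dim)
            for j in range(i + 1)
        ]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        attention.append(weights)
        outputs.append(
            [sum(w * values[j][d] for j, w in enumerate(weights)) for d in range(out_dim)]
        )
    return outputs, attention


# Hypothetical 2-d embeddings for a session: action, action, cooking, cooking.
sequence = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
# For brevity the same vectors act as queries, keys, and values.
outputs, attention = causal_self_attention(sequence, sequence, sequence)
```

Row i of `attention` has i + 1 entries, so the total work grows with the square of the sequence length. In this toy session the last position attends more to the earlier cooking item than to the action items.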

Complexity Trade-off:

| Model | Complexity (sequence length n) | Sequential Patterns | Long-Range Dependencies |

|---|---|---|---|

| Markov Chain | O(1) | Only last item | None |

| RNN/LSTM | O(n) | Yes | Weak (vanishing gradient) |

| SASRec (Transformer) | O(n²) | Yes | Strong |

BERT4Rec: Bidirectional Understanding

BERT4Rec (2019) adapts BERT's masked language modeling to recommendations.

Training Approach:

Instead of predicting the next item, BERT4Rec randomly masks items in the sequence and predicts them:

| Sequence | Content |

|---|---|

| Original | Movie A → Movie B → Movie C → Movie D → Movie E |

| Masked | Movie A → [MASK] → Movie C → [MASK] → Movie E |

| Task | Predict Movie B and Movie D from surrounding context |

Why Bidirectional?

SASRec only looks backward (causal attention). BERT4Rec looks both ways, understanding that Movie E might inform what Movie B should be. This captures richer patterns but requires careful handling at inference time.
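
A minimal sketch of the masking step, and of one common way to handle inference, follows. The function names, the `mask_prob` default, and the at-least-one-mask rule are assumptions for illustration; appending a mask token to the end of the history at serving time follows the approach described in the BERT4Rec paper.

```python
import random

MASK = "[MASK]"


def mask_sequence(items, mask_prob=0.3, seed=None):
    """Return (masked_items, labels); labels maps each masked position to the hidden item."""
    rng = random.Random(seed)
    masked, labels = [], {}
    for position, item in enumerate(items):
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels[position] = item
        else:
            masked.append(item)
    if not labels:
        # Guarantee at least one training target per sequence.
        position = rng.randrange(len(items))
        labels[position] = items[position]
        masked[position] = MASK
    return masked, labels


def mask_for_inference(items):
    """At serving time, append a mask so the model predicts the next item."""
    return list(items) + [MASK]


history = ["Movie A", "Movie B", "Movie C", "Movie D", "Movie E"]
masked, labels = mask_sequence(history, seed=7)
serving_input = mask_for_inference(history)
```

The inference helper is the "careful handling" in practice: training masks arbitrary positions, but serving only ever masks the final one, so there is no future context to look at.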

When to Use Sequential Models

| Scenario | Best Approach | Rationale |

|---|---|---|

| Long-form content (Netflix movies) | Two-Tower + light sequence features | Users make few decisions; each is deliberate |

| Short-form content (TikTok, YouTube Shorts) | Full sequential model | Rapid interactions; intent changes fast |

| E-commerce browsing | Hybrid | Session intent matters, but so do long-term preferences |

| Search ranking | Mostly non-sequential | Query intent dominates session history |

Interview Tip: When discussing Transformers for recommendations, always mention the latency constraint. You might say: "We'd use a Transformer-based model like SASRec in the ranking stage (500 candidates) but not to score every item in retrieval (10M candidates). Self-attention costs O(n²) in the history length n for each forward pass, which is affordable for hundreds of candidates but not for millions. For retrieval, we'd encode the sequence offline into a single query vector."

14. What Breaks in Production

Recommendation systems that work perfectly in offline evaluation often fail spectacularly in production. Here's what goes wrong and how to fix it.

System Failures & Solutions Matrix:

| Failure Mode | Symptom | Root Cause | Solution |

|---|---|---|---|

| Position Bias | Top results get 90% clicks | Users are lazy; trust the ranking implicitly | Shallow Tower: Learn position bias in a separate tower during training and drop it at serving. |

| Feedback Loop | User only sees Political News | Model over-optimizes for engagement on past clicks | Exploration Budget: Force 5% random/new content into feed. |

| Staleness | Recommendations feel "yesterday" | Embeddings not updating fast enough | Real-time Updates: Move User Profile to Feature Store (Redis). |

| Cold Start | New users bounce immediately | No history to base rank on | Heuristics: Serve "Global Best" or "Geographic Trending". |

Understanding Production Failure Modes:

The diagram shows four categories of problems and how they interconnect:

Bias Issues (Top Row): Three types of bias that corrupt recommendations:

- Position Bias: Items at position 1 get clicked regardless of quality—the model learns "top = good"

- Popularity Bias: Popular items get more impressions → more clicks → higher scores → even more impressions

- Echo Chambers: Users only see content similar to what they've seen before, narrowing their experience

The Feedback Loop (Middle): This is the core problem. Notice the circular flow:

- User clicks on recommendations

- Rec System uses those clicks to rank future items

- Training Data is updated with click history

- Cycle repeats → biases amplify over time

The arrows from the bias row show how each bias type feeds into this loop.

Operational Issues (Third Row): Technical problems that break systems in production:

- Feature Drift: User preferences change, but features computed yesterday don't reflect today

- Latency Issues: When the ML service times out, what fallback do you show?

- Cold Start: New users/items without data need special handling

Mitigation Strategies (Bottom Row): Solutions that feed back into the system:

- Debiasing: Techniques like IPW (Inverse Propensity Weighting) or position-aware models

- Diversification: Algorithms like MMR or DPP that force variety in results

- Monitoring: Drift detection to catch when model predictions diverge from reality

Position Bias

The Problem: Users click items at the top of the list regardless of relevance. Your model learns "position 1 = good" instead of "this item is relevant."

How to Detect: Randomly shuffle recommendations for a small percentage of traffic. Shuffling gives every position the same average relevance, so if position 1 still gets a much higher CTR than position 5 in the shuffled traffic, the gap is position bias.

Solutions:

| Approach | How It Works | Trade-off |

|---|---|---|

| Position as Feature | Include position in training; zero it out at inference | Simple but imperfect |

| Inverse Propensity Weighting | Weight training examples by 1/P(examined at position) | Theoretically sound; high variance |

| Shallow Tower (YouTube) | Separate network learns the position effect during training; it is dropped at serving | More complex; very effective |
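
To illustrate the Inverse Propensity Weighting row, here is a small sketch that estimates per-position propensities from randomized traffic and turns them into clipped example weights. The log format, function names, and the `max_weight` clip are assumptions; clipping is one common way to tame the variance mentioned in the table.

```python
def estimate_propensities(randomized_logs):
    """Estimate relative examination propensity per position.

    randomized_logs: iterable of (position, clicked) pairs collected while item
    order was shuffled, so CTR differences between positions reflect position alone.
    """
    shown, clicked = {}, {}
    for position, was_clicked in randomized_logs:
        shown[position] = shown.get(position, 0) + 1
        clicked[position] = clicked.get(position, 0) + int(was_clicked)
    ctr = {p: clicked[p] / shown[p] for p in shown}
    top = max(ctr.values())
    if top == 0:
        raise ValueError("no clicks in the randomized logs")
    return {p: c / top for p, c in ctr.items()}  # best position is normalized to 1.0


def ipw_weight(position, propensities, max_weight=10.0):
    """Weight for a clicked training example, clipped to limit variance."""
    propensity = max(propensities[position], 1e-6)
    return min(1.0 / propensity, max_weight)


# Hypothetical randomized logs: position 1 gets 20% CTR, position 5 gets 5% CTR.
logs = [(1, i < 20) for i in range(100)] + [(5, i < 5) for i in range(100)]
propensities = estimate_propensities(logs)
weight_at_5 = ipw_weight(5, propensities)
```

A click at position 5 counts four times as much as a click at position 1 here, which compensates for how rarely users look that far down.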

Popularity Bias (The Rich Get Richer)

The Problem: Popular items get more impressions → more clicks → higher predicted scores → more impressions. New and niche content never surfaces.

How to Detect: Track coverage metrics—what percentage of your catalog gets recommended? If 1% of items get 90% of impressions, you have a problem.

Solutions:

| Approach | How It Works | Trade-off |

|---|---|---|

| Exploration Budget | Reserve 5-10% of impressions for random/new items | Slight quality hit; gathers data |

| Popularity Penalty | Subtract α × log(popularity) from scores | Helps niche items; may hurt user experience |

| Multi-Armed Bandits | Thompson Sampling balances exploration/exploitation dynamically | More complex; strong theoretical guarantees |

| Creator-Side Fairness | Ensure minimum exposure for all creators meeting quality bar | Policy decision; may reduce engagement |
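
The Multi-Armed Bandits row can be sketched with Beta-Bernoulli Thompson Sampling. This is a toy simulation under stated assumptions: the three items and their click-through rates are hypothetical, each item is treated as an independent arm, and a uniform Beta(1, 1) prior is used.

```python
import random


def thompson_select(stats, rng):
    """Pick the item whose sampled CTR is highest. stats: item -> [clicks, skips]."""
    best_item, best_draw = None, -1.0
    for item, (clicks, skips) in stats.items():
        # Beta(1 + clicks, 1 + skips) is the posterior under a uniform prior.
        draw = rng.betavariate(1 + clicks, 1 + skips)
        if draw > best_draw:
            best_item, best_draw = item, draw
    return best_item


def simulate(true_ctr, rounds=2000, seed=0):
    """Serve one impression per round and return impressions per item."""
    rng = random.Random(seed)
    stats = {item: [0, 0] for item in true_ctr}
    impressions = {item: 0 for item in true_ctr}
    for _ in range(rounds):
        item = thompson_select(stats, rng)
        impressions[item] += 1
        clicked = rng.random() < true_ctr[item]
        stats[item][0 if clicked else 1] += 1
    return impressions


# Hypothetical CTRs; "new_niche" starts with no data but is actually the best item.
impressions = simulate({"popular": 0.05, "new_niche": 0.20, "weak": 0.01})
```

Unlike a fixed exploration budget, the amount of exploration shrinks on its own as the posteriors narrow, so the new item earns traffic only for as long as the data supports it.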

Feedback Loops (Echo Chambers)

The Problem: Model recommends X → User engages with X (it was shown!) → Model learns "user likes X" → Recommends more X. The user never sees alternatives.

Example: User watches one political video → Algorithm shows more → User's feed becomes entirely political, regardless of their actual breadth of interests.

Solutions:

| Approach | How It Works | Trade-off |

|---|---|---|

| Diversity Constraints | Force top-K to include N different categories | May feel random to users |

| Counterfactual Training | Train on "what would user do if shown Y instead of X?" | Requires causal inference; complex |

| Periodic Exploration | Occasionally reset user profiles or inject diverse content | Disrupts personalization |
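
One simple way to implement a diversity constraint is a per-category cap applied as a greedy re-rank. This is a related but simpler technique than forcing N distinct categories, and it is not MMR or DPP; the tuple layout and parameter names are assumptions.

```python
def rerank_with_category_cap(ranked_items, k, max_per_category):
    """Greedy re-rank: take items by score, skipping categories that are already full.

    ranked_items: list of (item_id, category, score) tuples in any order.
    """
    by_score = sorted(ranked_items, key=lambda item: item[2], reverse=True)
    selected, overflow, per_category = [], [], {}
    for item in by_score:
        category = item[1]
        if per_category.get(category, 0) < max_per_category:
            selected.append(item)
            per_category[category] = per_category.get(category, 0) + 1
        else:
            overflow.append(item)
        if len(selected) == k:
            return selected
    # Too few categories to fill k slots: backfill with the best skipped items.
    return selected + overflow[: k - len(selected)]


candidates = [
    ("v1", "politics", 0.95), ("v2", "politics", 0.93), ("v3", "politics", 0.90),
    ("v4", "cooking", 0.70), ("v5", "sports", 0.65), ("v6", "politics", 0.60),
]
feed = rerank_with_category_cap(candidates, k=4, max_per_category=2)
feed_ids = [item_id for item_id, _, _ in feed]
```

In this example the third politics video is skipped in favor of lower-scored cooking and sports items, which is exactly the relevance cost the trade-off column refers to.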

Cold Start Edge Cases

Even with cold start strategies, edge cases break systems:

New User + New Item: Neither has history. Fall back to global popularity, but this creates homogeneous experiences.

Returning User After Long Absence: Their old preferences may be stale. TikTok's expirable embeddings address this—old data automatically ages out.

Seasonal Interests: User searches for "Halloween costumes" every October. Simple models don't capture this periodicity.

Feature Drift and Staleness

The Problem: User embeddings computed from yesterday's data don't reflect today's interests. Video embeddings don't update when engagement patterns change.

How to Detect: Monitor prediction calibration over time. If impressions scored at P(click) = 0.1 show an actual CTR of 0.05 a week later, the model has lost calibration, which often means its features have drifted.
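
A calibration check of this kind can be sketched as a bucketed comparison between predicted and observed click rates. The function name, the equal-width bucketing, and the sample data are assumptions for illustration.

```python
def calibration_report(predictions, outcomes, num_buckets=10):
    """Bucket predictions by value and compare mean prediction to actual CTR."""
    buckets = {}
    for p, clicked in zip(predictions, outcomes):
        index = min(int(p * num_buckets), num_buckets - 1)
        total_p, clicks, count = buckets.get(index, (0.0, 0, 0))
        buckets[index] = (total_p + p, clicks + int(clicked), count + 1)
    report = []
    for index in sorted(buckets):
        total_p, clicks, count = buckets[index]
        report.append({
            "bucket": index,
            "mean_predicted": total_p / count,
            "actual_ctr": clicks / count,
            "count": count,
        })
    return report


# Hypothetical logs: the model predicts 0.1, but only 5 of 100 impressions were clicked.
predictions = [0.1] * 100
outcomes = [i < 5 for i in range(100)]
report = calibration_report(predictions, outcomes)
worst_gap = max(abs(row["mean_predicted"] - row["actual_ctr"]) for row in report)
```

Tracking `worst_gap` (or a count-weighted average of the gaps) per day gives a single number to alert on when calibration starts to slip.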

Solutions:

| Component | Update Frequency | Rationale |

|---|---|---|

| User short-term features | Real-time (Kafka → Feature Store) | Session intent changes fast |

| User long-term embeddings | Hourly | Stable but should reflect recent activity |

| Item embeddings | Daily | Content doesn't change; engagement patterns do |

| Model weights | Weekly full retrain + daily incremental | Balance freshness vs stability |

Production Wisdom: The most common production issue isn't algorithmic—it's data pipeline failures. A broken Kafka consumer means stale features. A failed embedding job means missing vectors. Build monitoring for data freshness, not just model accuracy.

Data Pipeline Monitoring Checklist

Production-grade recommendation systems need monitoring at every layer of the data pipeline, not just the model:

| Monitor | What to Track | Alert Threshold | Why It Matters |

|---|---|---|---|

| Event Ingestion | Kafka consumer lag, events/sec | Lag > 10 min or throughput drops 50% | Stale features lead to stale recommendations |

| Feature Freshness | Time since last Redis update per feature | Any feature > 1 hour stale | Old user embeddings miss recent behavior |

| Embedding Coverage | % of active users/items with valid embeddings | Coverage drops below 95% | Missing embeddings fall back to cold start |

| Model Prediction Drift | KL divergence between predicted and actual CTR distributions | KL > 0.1 over 24h window | Model is losing calibration |

| Serving Latency | P50, P95, P99 of inference time | P99 > 150ms | Users see loading spinners or timeouts |

| Null Feature Rate | % of inference requests with missing features | Any feature > 5% null | Signals a broken pipeline upstream |

Pro Tip: Set up a "data SLA dashboard" that shows feature freshness for each pipeline stage. When recommendations feel stale, the first question should be "is the data fresh?" — not "is the model wrong?"

15. A/B Testing: The Complete Guide

Deep Dive Available: For a comprehensive treatment of A/B testing fundamentals, statistical foundations, and practical implementation, see our dedicated article: A/B Testing Design and Analysis: How to Prove Causality with Data

"We shipped the model and engagement went up 5%!" means nothing without proper A/B testing. This section covers A/B testing specifically for recommendation systems.

Experiment Design

Random Assignment:

Users must be randomly assigned to control (A) or treatment (B). This ensures the only difference between groups is the change you're testing.

Bucket Assignment:

- User ID → Hash Function → Bucket (0-99)

- Buckets 0-49: Control (existing model)

- Buckets 50-99: Treatment (new model)
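
The bucket assignment above can be sketched with a salted hash. The experiment name and function names are hypothetical; salting the hash with the experiment name is a common practice so that the same users do not land in the treatment group of every experiment.

```python
import hashlib


def assign_bucket(user_id, experiment, num_buckets=100):
    """Deterministically map a user to a bucket in [0, num_buckets)."""
    # Salting with the experiment name decorrelates assignments across experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_buckets


def assign_variant(user_id, experiment, treatment_share=0.5):
    """Buckets below the cutoff are control; the rest are treatment."""
    bucket = assign_bucket(user_id, experiment)
    return "treatment" if bucket >= 100 * (1 - treatment_share) else "control"


counts = {"control": 0, "treatment": 0}
for user_id in range(10_000):
    counts[assign_variant(user_id, "new_ranker_v2")] += 1
```

Because the assignment is a pure function of the user ID and experiment name, a user sees the same variant on every request without any stored state.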

Sample Size Calculation:

Before running the test, calculate required sample size:

| Parameter | Typical Value | Impact |

|---|---|---|

| Baseline metric | e.g., 5% CTR | Higher baseline → fewer samples needed |

| Minimum Detectable Effect (MDE) | e.g., 2% relative lift | Smaller MDE → more samples needed |

| Statistical Power | 80% (standard) | Higher power → more samples needed |

| Significance Level | 5% (α = 0.05) | Lower α → more samples needed |

Rule of Thumb: For a 2% relative lift on 5% CTR (5.0% → 5.1%) with 80% power and 95% confidence, the standard two-proportion formula gives roughly 750,000 users per group.
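
The parameters in the table plug into the standard normal-approximation formula for comparing two proportions. The sketch below is one such approximation with a hypothetical function name; other formula variants and one-sided tests give different numbers, so treat the result as an estimate.

```python
import math
from statistics import NormalDist


def users_per_group(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided test of two proportions."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


n_small_lift = users_per_group(baseline=0.05, relative_lift=0.02)
n_large_lift = users_per_group(baseline=0.05, relative_lift=0.10)
```

Under this approximation a 2% relative lift on a 5% baseline needs on the order of 750,000 users per group, while a 10% lift needs only tens of thousands, because the required sample size scales with the inverse square of the effect.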

What Netflix Does

Netflix shared their A/B testing philosophy:

- Hypothesis First: Every test starts with a hypothesis: "Showing Top 10 will help members find content, increasing satisfaction."

- Local + Global Metrics: They track both the specific feature metric (Top 10 clicks) AND global metrics (overall viewing hours). An improvement in local metrics that hurts global metrics is a red flag.

- Holdout Groups: Even after shipping, Netflix maintains a small percentage of users on the old experience to measure long-term effects.

- Skepticism of Large Effects: If a test shows 20% improvement, they're suspicious. Large effects often indicate bugs, not breakthroughs. They re-run such tests.

What Spotify Does

Spotify runs hundreds of experiments simultaneously:

- Precise Sample Sizing: They calculate exact sample sizes to avoid underpowered tests that waste traffic without delivering answers.

- Pre-Registration: Hypotheses and success metrics are documented before the test runs. This prevents cherry-picking positive results.

- Minimum Duration: Tests run at least 1-2 weeks to capture weekly patterns (weekend vs weekday behavior differs).

- Learning from Failures: Failed tests are documented. Understanding WHY a hypothesis was wrong often yields more insight than confirming it was right.

Common A/B Testing Mistakes

| Mistake | Why It's Wrong | How to Avoid |

|---|---|---|

| Peeking at results early | Multiple comparisons inflate false positive rate | Pre-commit to sample size; use sequential testing methods if peeking is necessary |

| Stopping at significance | p = 0.049 today might be p = 0.06 tomorrow | Wait for pre-calculated sample size |

| Ignoring segments | Overall neutral effect may hide +10% for new users, -5% for power users | Pre-register key segments; analyze heterogeneous effects |

| Only measuring short-term | A feature that boosts CTR might hurt 30-day retention | Include long-term holdouts; track delayed metrics |

| Network effects | User A's treatment affects User B's control (e.g., in social features) | Use cluster randomization (randomize by friend groups, not individuals) |

Metrics Hierarchy for Recommendations

| Level | Category | Metrics |

|---|---|---|

| North Star | Business | Monthly Active Users, Revenue |

| Primary | Engagement | Sessions, Watch Time, Clicks, Impressions |

| Primary | Satisfaction | Ratings, Surveys, Likes, Shares |

| Primary | Quality | Diversity, Freshness, Coverage, Creator Mix |

| Model | Offline | AUC, NDCG@K, Recall@K, Precision@K |

Interview Insight: When discussing A/B testing, mention the distinction between "guardrail metrics" (things that must not get worse, like app crashes) and "success metrics" (things you're trying to improve, like engagement). A test can improve success metrics but fail if it hurts guardrails.

16. Cost Estimation at Scale

Understanding the cost of recommendation infrastructure helps you make architectural decisions and defend them in interviews.

Reference Architecture Costs

For a system serving 100M DAU with 10M items:

| Component | Service | Scale | Estimated Monthly Cost |

|---|---|---|---|

| Event Ingestion | AWS MSK (Kafka) | 500GB/day, 50K events/sec | $3,000 - $5,000 |

| Feature Store | Redis Cluster | 100GB hot data, 100K QPS | $2,000 - $4,000 |

| Vector Database | Self-hosted Faiss on EC2 | 10M vectors, 50K QPS | $1,500 - $3,000 |

| Model Serving | Triton on GPU (g4dn.xlarge) | 50K QPS, P99 < 50ms | $5,000 - $10,000 |

| Training | SageMaker / EC2 GPU | Daily training, weekly full retrain | $3,000 - $8,000 |

| Interaction Logs | S3 + Athena | 500GB/day, 30-day retention | $500 - $1,000 |

| Metadata DB | RDS PostgreSQL | 10M items, high-read | $500 - $1,000 |

| CDN / API Gateway | CloudFront + API Gateway | 50K QPS | $1,000 - $2,000 |

Total Estimated Range: 16,500 - 34,000 USD/month

Cost Drivers and Optimizations

| Cost Driver | Optimization | Savings |

|---|---|---|

| GPU Serving | Quantization (FP32 → INT8), batching requests | 50-70% |

| Vector Search | Product Quantization (PQ) reduces memory 4-8x | 60-80% |

| Feature Store | Tiered storage (hot/warm/cold), TTLs on old data | 40-60% |

| Training | Spot instances, incremental training instead of full retrains | 50-70% |

| Storage | Parquet compression, lifecycle policies to Glacier | 60-80% |

Build vs Buy: Vector Database

| Option | 10M Vectors Cost | 100M Vectors Cost | Pros | Cons |

|---|---|---|---|---|

| Pinecone | ~$70/month (Starter) | $700+/month | Fully managed, easy | Expensive at scale, vendor lock-in |

| Self-hosted Faiss | ~$500/month (3x r5.xlarge) | ~$2,000/month | Full control, cheapest at scale | Operational burden |

| AWS OpenSearch | ~$300/month | ~$1,500/month | Managed, AWS integration | Not specialized for vectors |

| Milvus (Self-hosted) | ~$600/month | ~$2,500/month | Feature-rich, open source | Complex to operate |

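Interview Insight: When asked about costs, don't just quote numbers; explain the trade-offs. "Pinecone is great for startups at ~70 USD/month. At 100M vectors the managed bill starts at 700+ USD/month and typically grows with query volume, while self-hosted Faiss costs ~2,000 USD/month in hardware plus ~2 engineer-weeks of setup and ongoing operations. The right choice depends on whether query volume or engineering time is the scarcer resource."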

Scaling Economics

Sublinear Scaling (Good):

- Vector search: 10x more vectors ≠ 10x more cost (indexing amortizes)

- Model serving: Batching requests improves GPU utilization

Linear Scaling (Expected):

- Event ingestion: 10x more users = ~10x more events = ~10x cost

- Storage: 10x more logs = ~10x storage cost

Superlinear Scaling (Dangerous):

- Model complexity: Adding features that interact pairwise (feature crosses) can increase serving cost quadratically

- A/B testing: More experiments = more traffic splits = reduced power per test

17. Conclusion

Designing a recommendation system at scale is about managing the funnel. You start with millions of items, filter them down with fast approximate algorithms (retrieval), and then carefully rank the survivors with heavy deep learning models.

The Complete Picture:

This summary diagram shows all three layers working together at scale.

Data Layer (Left Column): The foundation that captures and stores all information:

- Kafka: Real-time event streaming (clicks, watches, scrolls)

- S3: Long-term storage for training data

- Redis: Feature Store for instant feature lookup

- PostgreSQL: Metadata for users and videos

ML Layer (Center Column): The intelligence that powers recommendations:

- Two-Tower Model: Fast retrieval of candidate videos (500 from 10M)

- DLRM (Deep Learning Recommendation Model): Precise ranking of candidates

- Rules Engine: Business logic (diversity, freshness, content policy)

- Cold Start: Fallback strategies for new users/items

Serving Layer (Right Column): The infrastructure that delivers recommendations in <200ms:

- API Gateway: Authentication, rate limiting, request routing

- Load Balancer: Distributes traffic across servers

- Triton: GPU-optimized model inference

- Faiss: Sub-millisecond vector similarity search

The Flow:

- User request hits API Gateway

- Rec Service fetches user features from Redis

- Two-Tower model retrieves 500 candidates via Faiss

- DLRM ranks the 500 candidates

- Rules Engine applies business logic

- Top 10-20 videos returned to user
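
The flow above can be wired together in a few lines. This is a structural sketch only: the `recommend` function and its stage callbacks are hypothetical, and plain dictionaries and lambdas-style helpers stand in for Redis, Faiss with the Two-Tower model, the ranker, and the rules engine.

```python
def recommend(user_id, feature_store, retrieve, rank, apply_rules, k=10, fallback=None):
    """Run the funnel; every stage is injected so it can be swapped or mocked."""
    features = feature_store.get(user_id)
    if features is None:
        # Cold start: no profile yet, so serve the fallback list (e.g. trending).
        return list(fallback or [])[:k]
    candidates = retrieve(features, limit=500)  # retrieval: cheap, recall-oriented
    scored = rank(features, candidates)         # ranking: list of (item, score) pairs
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return apply_rules([item for item, _ in scored])[:k]  # business rules, then truncate


# Hypothetical in-memory stand-ins for the real services.
feature_store = {"u1": {"likes": "cooking"}}
catalog = {"v1": "cooking", "v2": "action", "v3": "cooking", "v4": "blocked"}


def retrieve(features, limit):
    return list(catalog)[:limit]


def rank(features, candidates):
    return [(v, 1.0 if catalog[v] == features["likes"] else 0.1) for v in candidates]


def apply_rules(items):
    return [v for v in items if catalog[v] != "blocked"]


feed = recommend("u1", feature_store, retrieve, rank, apply_rules, k=3)
cold_feed = recommend("new_user", feature_store, retrieve, rank, apply_rules,
                      fallback=["v2", "v1"])
```

Keeping each stage behind a plain function boundary makes it straightforward to give every stage its own latency budget and fallback.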

At Scale:

- 100M Daily Active Users

- 50,000 Queries Per Second

- <200ms P99 latency

- 10M videos in the catalog

Looking Ahead: LLMs Meet Recommendations

The next frontier in recommendation systems is the integration of Large Language Models (LLMs). Several trends are emerging:

LLMs as Feature Extractors: Instead of training custom content encoders, companies are using LLM embeddings (from models like GPT-4 or Gemini) to represent item content. These embeddings capture nuanced semantic meaning — a video about "beginner-friendly Python data visualization" and one about "matplotlib tutorial for newcomers" are understood as deeply similar, something traditional tag-based systems miss.

Conversational Recommendations: Platforms like Spotify ("DJ" feature) and Netflix are experimenting with chat-based interfaces where users describe what they want in natural language: "Something funny but not too silly, maybe a heist movie." An LLM interprets the intent and maps it to recommendation candidates.

Reasoning over User History: LLMs can analyze a user's watch history and generate explanations: "Because you watched three documentaries about space this week, you might enjoy this new series about the Mars mission." This combines recommendation with interpretability.

Where This Is Heading: Expect hybrid systems where traditional Two-Tower/DLRM models handle the high-throughput retrieval and ranking (50K QPS), while LLMs handle the "last mile" — re-ranking the final 20-50 items with richer contextual understanding, or powering conversational discovery features that complement the algorithmic feed.

Key Takeaways for Interviews

- Always start with requirements. Clarify scale, latency, and cold start before drawing boxes.

- Separate retrieval from ranking. This is the most important architectural decision. Retrieval prioritizes recall (fast, approximate). Ranking prioritizes precision (slow, accurate).

- Don't forget cold start. New users and new items break collaborative filtering. Always have a fallback (popularity, content-based features, exploration).

- Measure both offline AND online metrics. High offline NDCG doesn't guarantee high online watch time. Always A/B test.

- Negative sampling matters. Without negatives, the model thinks everything is relevant. In-batch negatives are the industry standard.

The "secret sauce" isn't just the algorithm—it's the infrastructure that feeds it:

- Kafka for real-time data ingestion at 500GB/day.

- Vector Databases (Faiss) for sub-millisecond search over 10M embeddings.

- Feature Stores (Redis) to provide instant user context without querying raw logs.

- Model Serving (Triton) for low-latency GPU inference at 50K QPS.

Further Reading

To master the concepts powering these models:

- Model Evaluation: Read our guide on Cross-Validation vs. The "Lucky Split".

- Infrastructure: Check out AWS vs GCP vs Azure for Machine Learning.

- Metrics: Read Why 99% Accuracy Can Be a Disaster.

Practice Problems

- Trending Now: Design a "Trending Now" section. Does it fit into the main funnel, or is it a separate cache?

- Diversity vs. Relevance: How would you ensure users see content from at least 5 different categories? Where in the pipeline do you enforce this?

- Creator Fairness: Small creators complain their videos never get recommended. How would you address this without hurting engagement metrics?

- Real-Time Personalization: A user just watched 3 cooking videos in a row. How fast can you update their recommendations?

These questions test whether you understand not just the "what" but the "why" behind each architectural decision.